Banks and financial platforms are no longer using software simply to analyze financial activity. Increasingly, they are allowing systems to act on it.

Loans are approved automatically. Transactions are blocked before authorization. Liquidity moves between accounts without human initiation. Risk exposure adjusts continuously rather than periodically.

A recommendation system informs judgment, and a decision system executes it.

Financial judgment, historically exercised by individuals, is being encoded into institutional behavior through software.

From Analytical Models to Operational Decision Systems

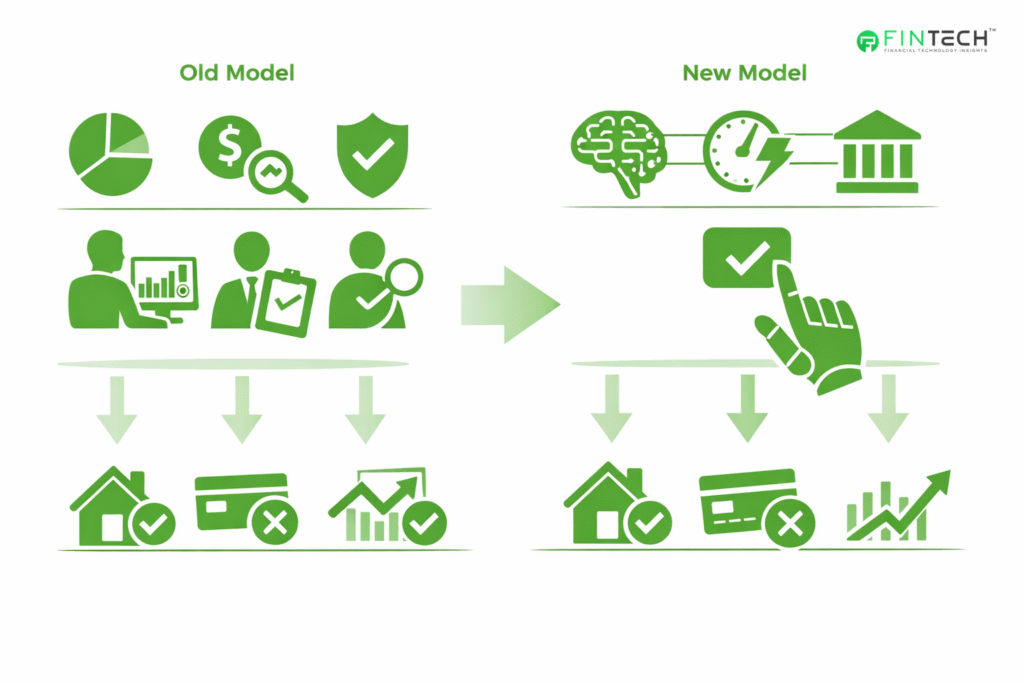

Financial services have always relied on quantitative models. Credit scoring, portfolio optimization, actuarial pricing, and fraud analytics are decades old.

Traditionally, those models supported people. They ranked, flagged, or recommended. A human still approved the mortgage, froze the account, or authorized the trade.

That operating model no longer aligns with financial infrastructure.

Payment networks, digital banking platforms, and embedded finance environments operate in real time. Authorization windows are measured in milliseconds, so human review of each transaction is operationally impossible. A transaction cannot wait for committee approval at checkout.

Fraud prevention illustrates the shift clearly. Visa disclosed in its 2024 risk report that its AI-driven monitoring analyzes hundreds of billions of transactions annually and prevents tens of billions of dollars in fraud each year. Individual decisions are never reviewed by analysts. The software decides instantly.

Credit underwriting is evolving in the same way. U.S. supervisory guidance from the Consumer Financial Protection Bureau has documented increasing use of machine learning models trained on behavioral and transactional data rather than solely traditional bureau scores.

The approval decision often occurs automatically at application submission.

The operational difference is fundamental: Previously, models informed decisions. Now, models are the decisions.

Institutions are no longer deploying analytics tools. They are deploying delegated authority.

Real-Time Finance Eliminates Human Review Cycles

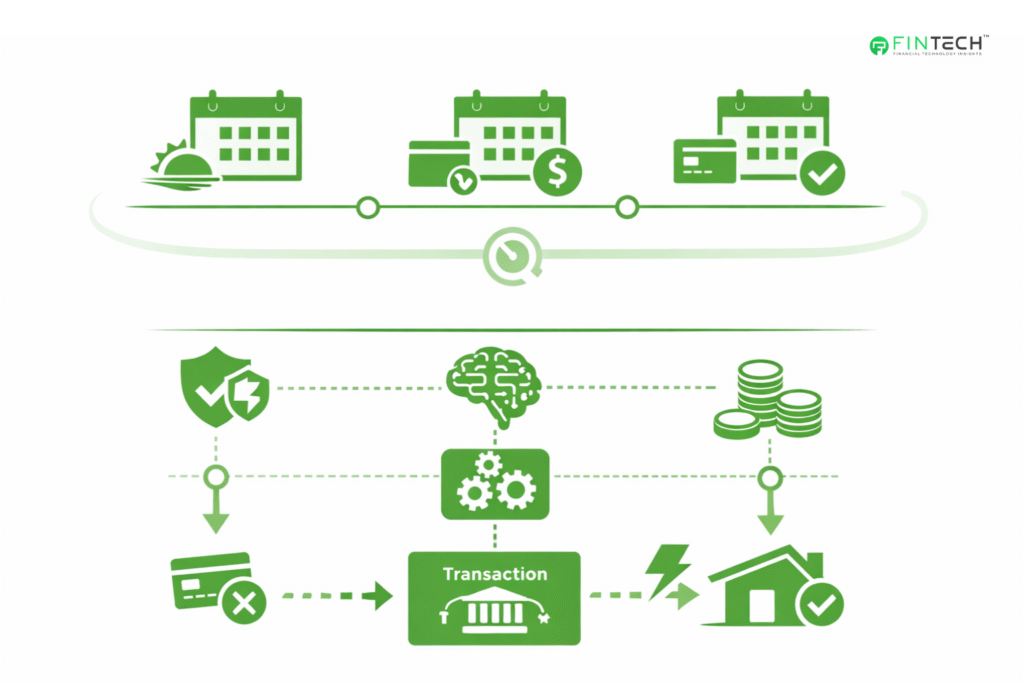

Finance historically operated on cycles. End-of-day settlement. Monthly billing. Quarterly risk review.

Real-time payments and open banking removed those buffers.

- Fraud detection must occur before authorization.

- Credit risk must be evaluated at the point of application.

- Liquidity must be positioned before payment initiation.

As a result, machine learning models are now embedded inside transaction processing infrastructure rather than analytics environments.

In practical terms, a model failure can now become an operational outage.

Financial risk has expanded beyond credit, market, and liquidity categories. Institutions must now manage decision infrastructure risk. If the decision engine fails, operations fail.
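One way to picture decision infrastructure risk is a scoring call that must answer within a fixed latency budget. The sketch below is illustrative only: the 50 ms budget, the 0.8 score cutoff, the $100 fallback limit, and the names `decide`, `fallback_rule`, and `slow_model` are all assumptions, not figures or interfaces taken from any institution. It shows a conservative static rule taking over when the model is slow or raises an error, so a model failure degrades the decision rather than halting the transaction flow.

```python
import concurrent.futures
import time
from typing import Callable, Tuple

LATENCY_BUDGET_S = 0.05      # hypothetical 50 ms authorization budget
DECLINE_THRESHOLD = 0.8      # hypothetical risk score cutoff
FALLBACK_AUTO_LIMIT = 100.0  # hypothetical amount the static rule may approve


def fallback_rule(amount: float) -> str:
    """Conservative static policy used when the model cannot answer in time."""
    return "approve" if amount <= FALLBACK_AUTO_LIMIT else "review"


def decide(
    score_fn: Callable[[float], float],
    amount: float,
    executor: concurrent.futures.Executor,
    budget_s: float = LATENCY_BUDGET_S,
) -> Tuple[str, str]:
    """Return (decision, source), where source is 'model' or 'fallback'."""
    future = executor.submit(score_fn, amount)
    try:
        risk = future.result(timeout=budget_s)
    except Exception:
        # Covers both a timeout and an error raised inside the model call.
        return fallback_rule(amount), "fallback"
    decision = "decline" if risk >= DECLINE_THRESHOLD else "approve"
    return decision, "model"


def slow_model(amount: float) -> float:
    """Stand-in for a scoring call that overruns the budget."""
    time.sleep(0.3)
    return 0.1
```

Recording the `source` value alongside each decision lets operations teams see how often the fallback path is carrying traffic, which is itself a signal that the decision engine is degrading.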

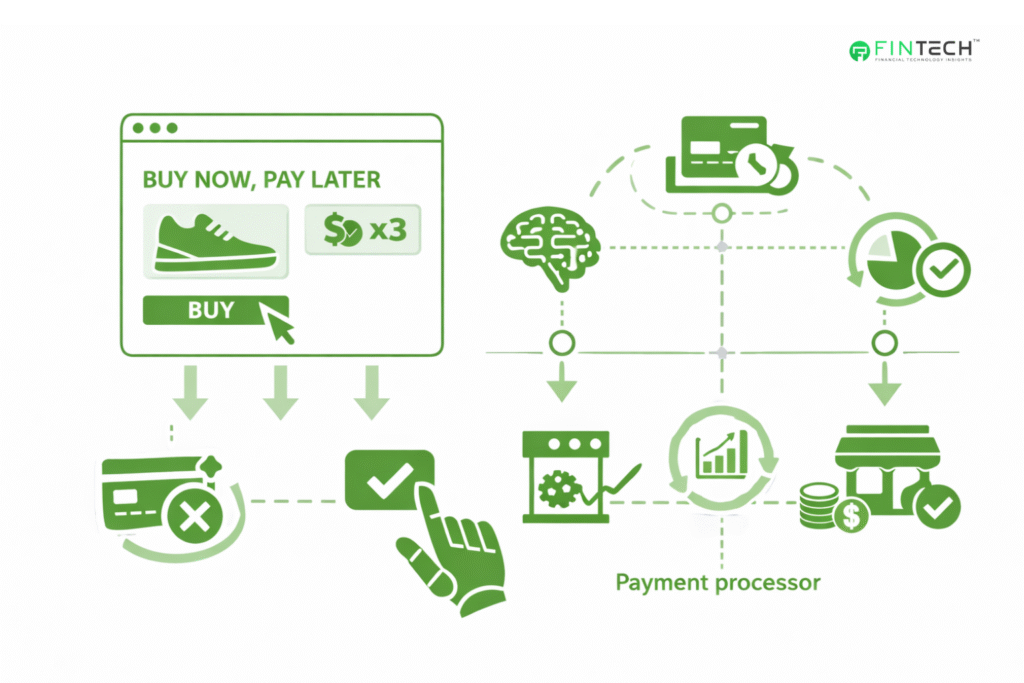

Fintech Economics Depend on Autonomous Decisions

Fintech companies did not adopt automated decisioning primarily for innovation. They adopted it for survival.

A digital lender cannot manually underwrite at scale. A neobank cannot staff a manual fraud review for every card swipe. Embedded finance providers operate inside partner platforms where approvals must occur during checkout.

Unit economics require immediate evaluation of risk and eligibility.

Buy Now Pay Later (BNPL) platforms provide a clear example. Approval decisions must occur while the consumer is still on a merchant page.

Public filings from providers describe underwriting models using transaction context and behavioral signals to determine installment eligibility in real time rather than through traditional application review.

Similarly, revenue-based financing and merchant cash advance providers analyze payment processor feeds to continuously evaluate repayment capacity. The financial product is not a loan application. It is a continuously monitored exposure.

This produces a strategic insight that many traditional institutions underestimate.

The competitive advantage in modern finance is not user interface design. It is decision latency.

The institution that evaluates risk fastest gains distribution. The one that cannot operate at transaction speed gradually loses it.

Regulation Is Catching Up to Algorithmic Judgment

Financial regulation historically focused on capital adequacy, conduct, and disclosure. Autonomous decision systems introduce a different oversight challenge.

Credit denials must still be explained to consumers under U.S. lending law. Account freezes affect access to funds. AML monitoring influences law enforcement processes. A machine decision carries legal consequences.

Regulators have therefore begun shifting from supervising models to supervising behavior.

The CFPB has clarified that lenders using complex algorithms must still provide specific reasons for adverse credit decisions. Black-box reasoning is insufficient. Institutions must understand why the system acted, not merely that it acted.

At the same time, the European Union’s AI Act classifies creditworthiness assessment and insurance pricing as high-risk AI applications, requiring documentation, human oversight procedures, and monitoring for discriminatory outcomes.

The most predictive models are often the least interpretable.

Executives increasingly manage a trade-off between performance and explainability. Some institutions deploy parallel governance approaches, combining high-performance predictive models with constrained explanatory frameworks for compliance and auditability.
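The explanatory side of such a parallel approach can be sketched with a small, interpretable scorecard that yields specific reasons for an adverse decision. Everything here is hypothetical: the feature names, weights, baseline values, and reason wording are invented for illustration, and ranking reasons by contribution relative to a baseline applicant is one simple technique among several, not a statement of what any regulator accepts.

```python
import math
from typing import Dict, List

# Hypothetical scorecard: positive weights raise the approval probability.
WEIGHTS: Dict[str, float] = {
    "utilization": -2.0,
    "months_on_file": 0.03,
    "recent_delinquencies": -0.9,
}
INTERCEPT = 0.5

# Hypothetical reference applicant that contributions are measured against.
BASELINE: Dict[str, float] = {
    "utilization": 0.30,
    "months_on_file": 60,
    "recent_delinquencies": 0,
}

REASON_TEXT: Dict[str, str] = {
    "utilization": "Proportion of revolving credit in use is too high",
    "months_on_file": "Length of credit history is too short",
    "recent_delinquencies": "Recent delinquency on one or more accounts",
}


def approval_probability(applicant: Dict[str, float]) -> float:
    """Logistic score from the linear scorecard."""
    z = INTERCEPT + sum(WEIGHTS[k] * applicant[k] for k in WEIGHTS)
    return 1.0 / (1.0 + math.exp(-z))


def adverse_reasons(applicant: Dict[str, float], top_n: int = 2) -> List[str]:
    """Return the features that pulled the score down most versus the baseline."""
    contributions = {
        k: WEIGHTS[k] * (applicant[k] - BASELINE[k]) for k in WEIGHTS
    }
    negative = sorted((c, k) for k, c in contributions.items() if c < 0)
    return [REASON_TEXT[k] for _, k in negative[:top_n]]


applicant = {"utilization": 0.9, "months_on_file": 12, "recent_delinquencies": 2}
reasons = adverse_reasons(applicant)
```

Because each contribution is a weight times a distance from the baseline, the reasons can be audited by hand, which is the property a constrained explanatory framework trades predictive power to obtain.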

Regulators are no longer reviewing financial products alone. They are reviewing institutional decision logic. Software behavior has become a regulatory subject.

Bias, Data Quality, and Economic Stability

Concerns about bias are frequently discussed in ethical terms. In practice, they are operational and financial concerns.

Studies by the Federal Reserve Bank of New York and supervisory agencies indicate that alternative data and machine learning underwriting can expand credit access to thin-file consumers.

At the same time, correlated variables may unintentionally encode socioeconomic disparities depending on training data selection.

The technology itself is not inherently biased. The dataset is.

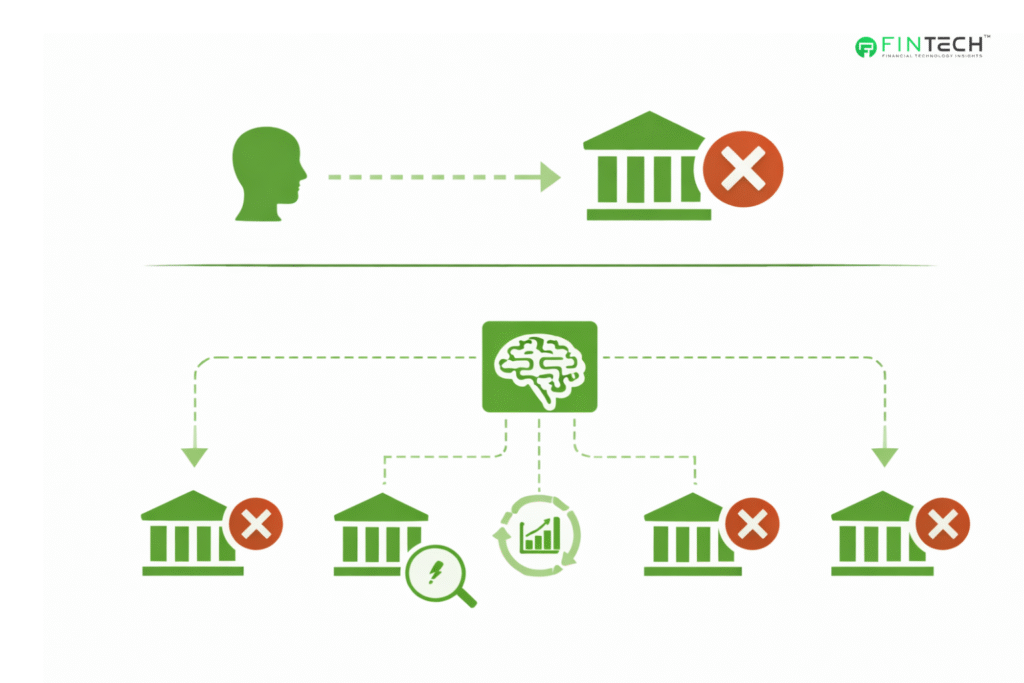

This matters for stability as much as fairness. Models trained on stable economic periods can degrade quickly when conditions shift.

During the 2022-2023 rise in interest rates, institutions reported model drift as consumer spending patterns and repayment behavior changed.

A human underwriter adapts intuitively. A model adapts only when retrained or governed.

Automated decision systems, therefore, introduce a new operational dependency. Institutions must continuously monitor data distributions, not just financial exposure.
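Monitoring a data distribution can be made concrete with the Population Stability Index (PSI), a widely used drift measure that compares the share of observations falling in each bin at training time with the share seen in production. The sketch below is a minimal version under stated assumptions: the bin edges and sample values are invented, and the function name `psi` is illustrative rather than taken from any library.

```python
import math
from typing import List, Sequence


def psi(
    expected: Sequence[float],
    actual: Sequence[float],
    edges: Sequence[float],
    eps: float = 1e-6,
) -> float:
    """Population Stability Index between a training and a live sample.

    `edges` are ascending bin boundaries; values below the first edge fall in
    bin 0 and values at or above the last edge fall in the final bin.
    """

    def shares(values: Sequence[float]) -> List[float]:
        counts = [0] * (len(edges) + 1)
        for v in values:
            counts[sum(v >= e for e in edges)] += 1
        total = len(values)
        # Floor empty bins at eps so the logarithm stays defined.
        return [max(c / total, eps) for c in counts]

    e_shares, a_shares = shares(expected), shares(actual)
    return sum(
        (a - e) * math.log(a / e) for e, a in zip(e_shares, a_shares)
    )


# Hypothetical monthly card spend, in hundreds of dollars.
training_spend = [10, 20, 30, 40, 50, 60, 70, 80]
live_spend = [v + 40 for v in training_spend]  # spending has shifted upward
bin_edges = [25, 50, 75]

drift = psi(training_spend, live_spend, bin_edges)
```

A common rule of thumb treats PSI below 0.1 as stable and above 0.25 as a significant shift, though the thresholds that trigger retraining or review are a policy choice for each institution.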

A flawed dataset can originate poor loans faster than any manual process ever could.

Bias risk and credit risk increasingly overlap.

Operational Risk Becomes Financial Risk

The most underappreciated consequence of automated financial decisioning is scale.

A mistaken human decision affects one account. A mistaken system decision can affect thousands simultaneously.

A fraud model's false positives can block legitimate customer transactions across regions. An underwriting calibration error can originate low-quality portfolios rapidly. An AML tuning change can overwhelm investigation capacity overnight.

The BIS has warned that concentration risk may emerge if institutions rely on similar third-party AI vendors or training datasets. A shared modeling flaw could propagate across multiple organizations at once.

This resembles systemic financial risk but originates in technology architecture rather than market exposure.

Vendor management, therefore, changes materially. Model governance becomes part of enterprise risk management and operational resilience, not merely audit compliance. Financial institutions are effectively supervising decision software the way regulators supervise them.

Human Judgment Moves Upstream

Automation does not remove human judgment. It relocates it.

Financial professionals are no longer reviewing individual transactions. They are defining the policies under which systems act.

Risk committees approve model thresholds. Compliance teams audit training data lineage. Treasury leaders monitor automated liquidity allocation rules.

Senior leadership must therefore understand not just financial indicators but model behavior concepts such as distribution shift, explainability constraints, and monitoring triggers.

Supervisory observations from central banks increasingly emphasize that effective governance depends on whether senior management can challenge model outcomes rather than rely solely on vendor documentation.

Oversight is no longer transaction-level. It is framework-level. Executives are governing decision systems, not decisions.

Industry Examples

Card networks use AI risk scoring to evaluate transaction legitimacy before authorization. Large issuers dynamically adjust credit exposure using updated customer data rather than periodic review cycles.

Corporate treasury platforms automatically optimize cash positioning across accounts to balance liquidity and yield.

Payment platforms continuously evaluate merchants for fraud and chargeback risk.

The institution retains responsibility, but the moment of judgment is now embedded in software operations rather than human workflow.

The Strategic Implication for Financial Leaders

Autonomous decisioning turns artificial intelligence into a governance issue rather than a technology initiative.

Three executive questions now matter:

- What authority has the organization delegated to systems?

- How is accountability retained?

- What controls exist when behavior deviates from expectations?
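One illustration of a control for deviating behavior is a circuit breaker that watches the recent decline rate and, once it exceeds an agreed limit, routes further declines to manual review until a person resets it. This is a sketch under assumptions: the class name `DeclineRateBreaker`, the window size, the 10% limit, and the `manual_review` route are hypothetical choices, not a description of any production system.

```python
from collections import deque


class DeclineRateBreaker:
    """Latching guard on the share of declines in a sliding window."""

    def __init__(
        self,
        window: int = 1000,
        max_decline_rate: float = 0.10,
        min_samples: int = 100,
    ) -> None:
        self.outcomes = deque(maxlen=window)  # True means the model declined
        self.max_decline_rate = max_decline_rate
        self.min_samples = min_samples
        self.tripped = False

    def record(self, declined: bool) -> None:
        """Log one model outcome and trip the breaker if the rate is too high."""
        self.outcomes.append(declined)
        if len(self.outcomes) >= self.min_samples:
            rate = sum(self.outcomes) / len(self.outcomes)
            if rate > self.max_decline_rate:
                self.tripped = True

    def route(self, model_decision: str) -> str:
        """Once tripped, send declines to people instead of executing them."""
        if self.tripped and model_decision == "decline":
            return "manual_review"
        return model_decision

    def reset(self) -> None:
        """Called by a human owner after the cause has been investigated."""
        self.outcomes.clear()
        self.tripped = False
```

The breaker latches deliberately: it stays tripped even if the rate recovers, so restoring automated authority is an explicit human decision rather than something the system does on its own.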

Many institutions still apply legacy model risk frameworks designed for statistical analysis tools. Those frameworks assumed human approval remained in the loop. That assumption no longer holds.

The operational reality is straightforward. A probability model estimates risk. A decision system executes consequences.

Once execution occurs automatically, AI becomes part of fiduciary responsibility.

Conclusion

Financial services are evolving into a continuously operating decision environment. The conversation often focuses on efficiency or customer experience improvements. Those are secondary effects. The primary change is institutional.

Financial judgment is increasingly expressed through algorithms acting at machine speed across millions of events, faster than review committees, audit cycles, and regulatory reporting processes can follow.

Organizations that treat these systems as software deployments will struggle to govern them. Organizations that treat them as controlled decision authorities will adapt.

Software is not replacing financial leadership. It is operationalizing it.

When a system approves a loan, blocks a payment, or reallocates liquidity, the institution remains accountable. The only change is that the decision is executed continuously rather than individually.

And that transforms financial management from supervising people who make decisions to supervising the decision-making systems themselves.

FAQs

1. How are banks actually using AI to make financial decisions today?

Financial institutions embed machine-learning models directly into transaction workflows. Systems now approve or decline payments, set credit limits, prioritize fraud alerts, adjust liquidity positions, and screen AML activity in real time. The key shift is from recommendation to execution.

2. What risks do automated financial decision systems introduce for executives?

The primary risk is scaled error. A human mistake affects one customer. A system mistake affects thousands simultaneously. Model drift, biased training data, or incorrect thresholds can generate false fraud blocks, poor-quality lending portfolios, or regulatory violations.

3. How do regulators view AI-driven credit and payment decisions?

Regulators treat them as accountable institutional behavior. U.S. agencies require lenders to explain credit denials even when complex algorithms are used, and supervisors increasingly expect auditability, documentation, and monitoring of automated systems.

4. Why is real-time decisioning becoming a competitive advantage in fintech?

Modern finance operates at transaction speed. Payments settle instantly, and credit approvals happen at checkout. The provider that evaluates risk faster can safely approve more transactions and capture more revenue.

5. What role do human executives still play if software makes the decisions?

Human judgment shifts from approving transactions to governing decision policy. Leaders set risk tolerance, model thresholds, escalation procedures, and monitoring rules.
